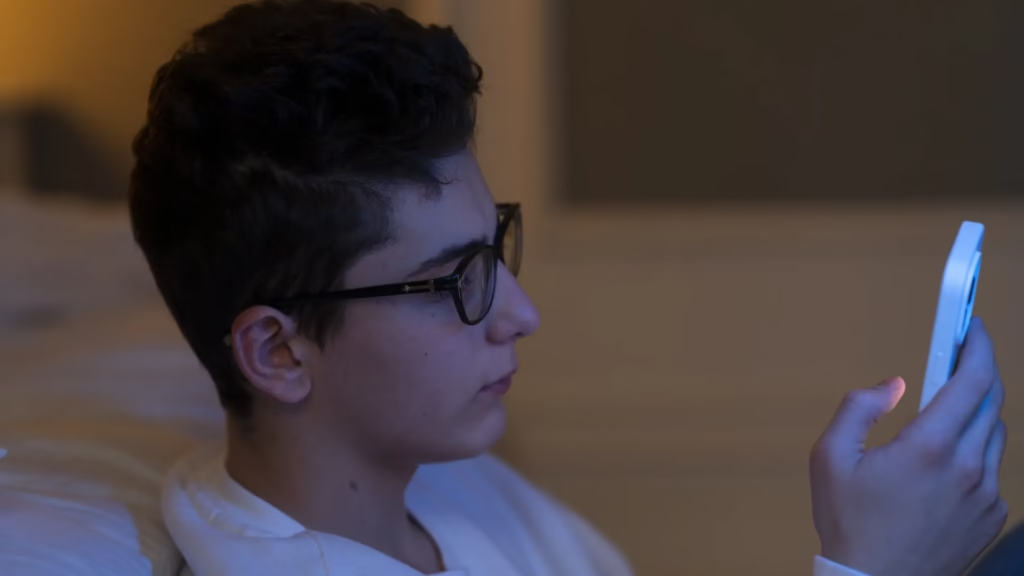

Social networks are filled with exercises that promise to sort out your life with four simple questions to an artificial intelligence (AI) tool. “Express your life”, “Create the story of my future” and “What would a day in your dream life look like?” are some of the slogans circulating on the Internet, and they have attracted thousands of young people. AI is becoming a kind of confidant for young people, who turn to this technology with any doubt or problem. According to psychologists and experts in the field, because of their age and their lack of digital literacy, minors are at risk of living in a parallel reality and losing one of the most characteristic human abilities: the ability to relate to their peers.

Among 14- to 17-year-olds, one in three already admits to turning to AI to talk about personal problems or to make important decisions, according to a report released on Wednesday by Point and GAD3. According to the study, some families even acknowledge that, if this tool can become anything, it is a stand-in father or mother to ask for advice on personal decisions. “Once AI matures, it will soon be fully integrated into mobile phones; they will carry it in their hands and talk to it. It is very good for some things, but it can become very personal, and that will be a problem,” Elena Martinez, director of the company Endless, warns 20 Minutos. She says the way to prevent this is by “developing human abilities” and training adolescents in critical thinking. “They need to know that AI is not a friend, it is not a person… it is a machine,” she added.

According to the research, 85% of adolescents use AI at least once a week; however, six out of ten students and teachers say schools lack training in the use of this technology. There is an obvious gap that experts are asking to be addressed before it is too late, as happened with the Internet and social networks. “It could get out of hand, but we are still in time to apply what we have learned from social networks. This can be prevented with a lot of digital literacy, but we need to start… otherwise, the same thing could happen to us,” Martinez points out.

Developing a personality with a machine

Handing all decisions over to a machine carries another risk: young people's cognitive and social abilities may rust, or develop according to other standards. “We have to remember that when personality develops in adolescence, it does so in the family, but also in the peer group. In addition, all the brain circuits behind autobiographical memory, which makes that inner work possible, come into play. They begin to ask themselves who they are, what they are like and where they come from… That is why it is very important that they have spaces in which to do that work,” Sylvia Alava, a health and educational psychologist, tells this newspaper.

“AI is very good at providing information, but sometimes we still need an emotional connection”

According to Elena Martinez, many mothers have told her that their children ask AI how to organize their birthday party or how to ask out the girl they like. A priori, these are minor matters; the problem arises when AI is used to resolve personal worries or anxieties. “There are things that machines cannot do. A machine is not going to hug you, and that may be exactly what you need at that moment. That is something a friend gives you… AI is very good, but sometimes we need emotional connection, and we cannot lose it,” says psychologist Sylvia Alava.

Jose Louis Killan, a general director at the Universidad Francisco de Vitoria (UFV) and author of the book Human Flourishing in the Age of AI, agrees. “There are already many sites offering all kinds of advice. Psychological advice, too. That carries a risk. It is already happening in Japan, where people are isolating themselves… We cannot forget that people need to be in contact with one another… There are studies showing that the people most satisfied at the end of their lives are those with good relationships,” he says.

Ultimately, these young people put themselves in the hands of digital sites without knowing who developed that technology or who runs it. “Parents and educators have a responsibility to teach them to question, to know what to engage with and what not. Otherwise, at such an early age, they run the risk of shutting themselves away to live in a parallel reality that they then cannot connect with the real world. If that is taken from us, what are we left with?” he asks. For the UFV director, losing those skills means losing part of the essence of the human being, which in his view is creativity. “If we put people in an environment where they are not creative and are cut off from one another, we are killing them, so to speak,” he adds.

Machines and unwanted loneliness

For Sylvia Alava, this issue may also be related to unwanted loneliness, which already affects three out of four young people in Spain. According to the Spanish Pediatric Association, mental disorders in children and adolescents have increased by 47%. “We know that one of the best protective factors for mental health is having a good support network. Whatever the need, it comes down to that human factor. That is not AI.” The psychologist also stresses that minors need to be allowed to make mistakes, and to learn to recognize their emotions properly and express them.

“What matters is the part of their training they are missing. We must do educational work in the community, because we see so many young people who are truly afraid of feeling unpleasant emotions. You have to learn to live with them, manage them and develop strategies. An AI can tell you that diaphragmatic breathing is very good for tension, for example, but if the young person does not actually do it, it will not help. Obviously, this does not apply to cases of mental disorders, but you have to understand that there will be times when you feel bad,” she concludes.

According to Killan, regulations are also being promoted to curb the inappropriate use of these technologies. For example, the government gave the green light to the Law for the Protection of Minors in Digital Environments, which, among many other measures, contemplates up to two years in prison for creating sexually explicit images with artificial intelligence. However, he maintains, it is also “a personal issue”: there is personal responsibility as well. “This is a matter of education at home, in families, schools and universities. Not everything has to be accepted. It is a mistake to move at the pace set by the technology companies' sales.”